The GPUs running AI workloads today were designed to render video game graphics. The programming languages agents use were built for human readability. The development processes — sprint cycles, code reviews, deployment windows — are all structured around human working hours and attention spans.

Despite these constraints, AI agents are already building C compilers from scratch for roughly $20,000 in compute costs and consistently outperforming average human engineers on standard tasks. When purpose-built infrastructure arrives, following the same vertical integration pattern that transformed smartphones, the cost and capability equation changes dramatically. That shift is the natural evolution of any technology platform.

The smartphone parallel: five layers of integration

Early smartphones ran on repurposed mobile phone hardware with desktop-derived operating systems. They worked, but the architecture was borrowed. Then came the integration layers, each delivering step-change improvements:

Layer one: Purpose-built mobile operating systems — iOS and Android, optimised for touch interfaces and mobile constraints rather than adapted from desktop paradigms.

Layer two: Custom silicon designed specifically for mobile workloads. Apple’s A-series chips weren’t just smaller desktop processors; they were architected from the ground up for mobile use cases.

Layer three: Specialised languages and frameworks. Swift was created for Apple’s platforms and Kotlin became Android’s preferred language, so mobile development no longer depended on tooling ported from other contexts.

Layer four: Cloud-native architectures that assumed mobile-first applications, not desktop apps squeezed onto smaller screens.

Layer five: An entire ecosystem of services built around mobile devices as the primary platform, from payments to authentication to location services.

Each layer compounded the improvements of the previous one. The difference between a 2007 iPhone and a 2015 iPhone wasn’t just better specs — it was a fundamentally different platform enabled by vertical integration.

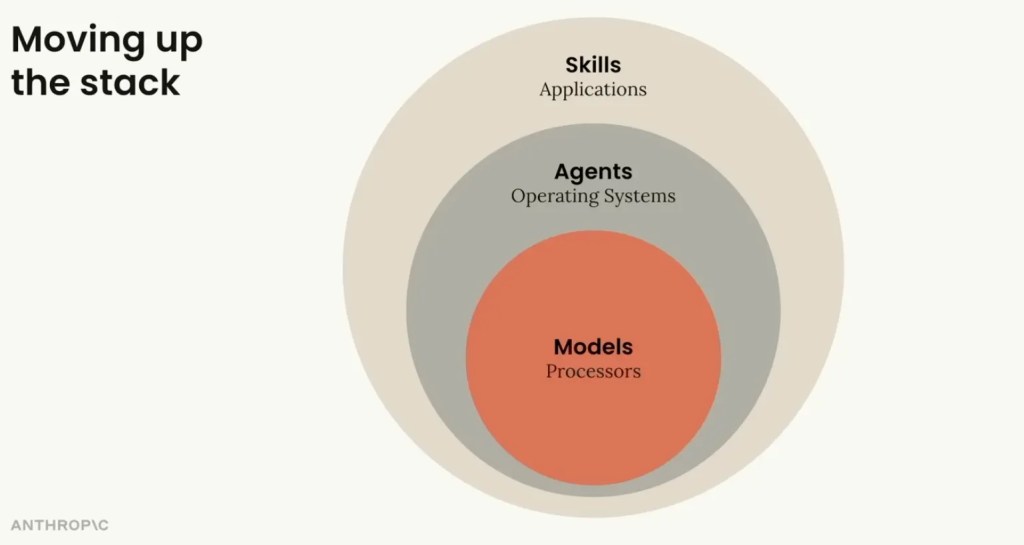

The AI stack is entering the same progression.

What purpose-built looks like

Custom AI chips are already shipping or in development. Google’s TPUs, other ASICs, and chips from well-funded startups are designed for neural network operations rather than graphics rendering. These aren’t incremental improvements on Nvidia’s architecture; they’re different approaches optimised for different workloads.

Programming paradigms are shifting from human-oriented languages to frameworks designed for agent workflows. AI agents don’t need readable variable names or structured flow control designed for human comprehension. They work with tokens and can operate on representations optimised for their own processing, not ours.

Development processes are being redesigned around agent capabilities: continuous, asynchronous, parallel-by-default workflows rather than sequential sprints bounded by human availability. The infrastructure assumes agents are the primary operators, with humans in supervisory roles.
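To make "parallel-by-default" concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the task names, the `run_agent` stand-in, and the review queue are hypothetical and do not describe any particular product. The point is only the shape of the workflow: every task starts at once, and the human reviews outcomes rather than scheduling work.

```python
import asyncio

# Hypothetical task names; a real system would pull these from a backlog.
TASKS = ["fix-login-bug", "add-export-endpoint", "update-docs"]


async def run_agent(task: str) -> dict:
    """Stand-in for an agent working on one task in its own sandbox."""
    await asyncio.sleep(0.01)  # placeholder for real agent work
    return {"task": task, "status": "ready-for-review"}


async def run_all(tasks: list[str]) -> list[dict]:
    # Parallel by default: every task starts immediately, none waits on another.
    return await asyncio.gather(*(run_agent(t) for t in tasks))


def human_review_queue(results: list[dict]) -> list[str]:
    # The human supervises outcomes instead of sequencing the work.
    return [r["task"] for r in results if r["status"] == "ready-for-review"]


results = asyncio.run(run_all(TASKS))
queue = human_review_queue(results)
```

In a sprint-style process the three tasks would be picked up one after another as people became available. Here the only sequential step left is the supervisory review at the end.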

Aaron Levie maps the emerging stack: agent-specific sandboxed compute environments (E2B, Daytona, Modal, Cloudflare), agent identity and authentication systems, agent-native file storage and memory, agent wallets for microtransactions (Stripe, Coinbase), and agent-optimised search (Parallel, Exa). These aren’t conceptual products — these companies exist and are building now.

His framing of “make something agents want” — riffing on Paul Graham’s “make something people want” — captures the shift. Software is being redesigned with agents as the primary user. Every feature needs an API. Every service needs an MCP server. If agents can’t sign up and start using your product autonomously, you’re invisible to the next generation of software consumers.

The platform convergence is worth watching. Code repositories are evolving from version control into the orchestration layer for the entire software development lifecycle. Whoever controls that layer controls the persistent context that makes agents effective across sessions. The major AI labs are moving toward owning this layer, which suggests the future stack isn’t “AI model plus separate dev tools” but an integrated platform where context, runtime, and code repository collapse into one thing.

A parallel convergence is happening at the operator level: always-on agents you communicate with through mainstream channels like messaging apps or email rather than terminals or dashboards. The human doesn’t need specialised tooling — they need a channel to the agent that handles everything downstream.

Combine the GUI agent environment with the always-on operator agent and the platform layer, and you get a full vertical integration play — from software development lifecycle through to production operations, under one roof. This is the smartphone moment for developer tooling: separate apps collapsing into an integrated platform.

What changes when the stack is purpose-built

The trajectory over the last few years has been clear: model capabilities improve while cost per unit of useful output drops. But today’s pricing is misleading. Current subscription tiers are heavily subsidised, much like AWS in 2006, and AI companies are burning cash to establish market share. The real story isn’t simply “it gets cheaper.” Provider economics improve toward sustainability while capabilities compound for users. Both curves move in the right direction, but today’s prices are a poor guide to true costs, so extrapolating from them, up or down, is a mistake.

Where the stack is unevenly developed matters more than the overall cost trajectory. Code generation has leapt ahead, but DevOps, production support, and operational reliability are further behind — areas where consequences of AI errors are immediate and expensive. The bottleneck isn’t capability. General-purpose coding agents can already manage infrastructure via CLI and APIs. The bottleneck is the trust boundary: giving an agent access to production systems and customer data raises security concerns that don’t exist in a sandboxed branch. Specialised DevOps agents are emerging to address this, but they’ll likely follow the familiar platform-shift pattern — absorbed into general-purpose agents once the sandboxing layer matures.

There’s an irony in what AI is automating first: not the creative work developers love, but the process work that fell through the cracks as agile teams prioritised working software over comprehensive documentation. The Agile Manifesto was right that heavyweight documentation was wasteful, but in practice teams swung too far the other way, under-documenting, under-testing, and accumulating technical debt. Agents don’t have that bias. They’ll write tests and documentation with the same attention as feature code.

What this means now

Mainstream custom AI chips are a few years out. AI-native frameworks may arrive even sooner. Organisational redesign is the slowest layer because it is cultural, not technical.

None of that is a reason to wait. Dark factory teams, those running largely unattended, agent-driven development pipelines, are already running production workflows on the borrowed stack, and the gap between them and companies still debating AI adoption compounds monthly. Every month of building expertise on today’s imperfect infrastructure is learning that transfers directly to the purpose-built era.

The early smartphone era produced the apps, habits, and companies that dominated the next decade. We’re in the equivalent moment for AI. The stack will improve. The question is whether you’ll be positioned to take advantage of it.