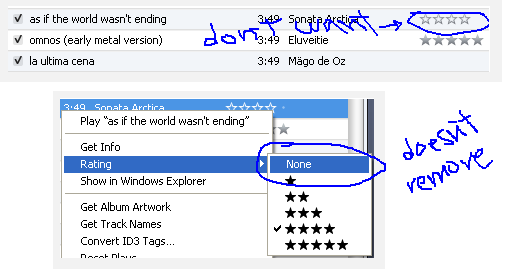

I use iTunes ratings quite extensively to organize my music collection with smart playlists. Lately, songs I had never rated started showing up in those smart playlists. In iTunes they appear with grey stars that can’t be removed from the song directly. On the iPod/iPhone/iPad it’s even worse: they show up as normal ratings, making you wonder how your library got messed up.

It turns out this was due to album ratings being applied automatically to the songs in the album. The feature has been around for a while, but one of the recent iTunes updates seems to have created album ratings on its own and messed things up. Fret not, as there’s a simple solution: go to the albums view and remove the album rating. Any songs you rated manually in the album aren’t affected, of course.

Source: How to remove the automatic ratings from songs in iTunes? – Ask Different